Initial Configuration of CubeBackup for Google Workspace using Docker

This article will guide you through the initial configuration of CubeBackup in a docker container. If you are using CubeBackup on Windows or Linux directly, please refer to Initial Configuration on Windows or Initial Configuration on Linux.

Detailed instructions on how to launch a CubeBackup docker container can be found here: Install CubeBackup using a Docker container.

Step 1. Open the CubeBackup web console

After the docker container has been successfully launched, point your browser to http://<host_IP>:<port> for the initial configuration.

Tips:

- The web console can be accessed using the above URL through any browser within your network.

- If permitted by your company's firewall policy, it can also be accessed from outside your network at http://<hostmachine_external_ip>:<port>
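As a minimal sketch of the address format above, the following Python helper builds the console URL from a host IP and a published port. The function name and the sample values are illustrative assumptions, not part of CubeBackup; use the port that Docker actually published for your container.

```python
def console_url(host: str, port: int, scheme: str = "http") -> str:
    """Build a web console address in the http://<host_IP>:<port> form."""
    if not 1 <= port <= 65535:
        raise ValueError(f"port out of range: {port}")
    return f"{scheme}://{host}:{port}"


# Hypothetical host IP and port, for illustration only.
print(console_url("192.168.1.20", 32768))
```

The same helper works for the external address if you pass the host machine's external IP instead.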

Step 2. Set backup location

CubeBackup allows you to back up Google Workspace data to either on-premises storage or your private cloud storage. Currently, CubeBackup supports backing up to a local disk, NAS/SAN, Amazon S3 storage, Google Cloud storage, Microsoft Azure Blob storage, and Amazon S3-compatible storage. Please click the corresponding tab for detailed instructions.

Note: Be sure to set the backup and data index locations in the web console to the docker container paths - not the host paths!

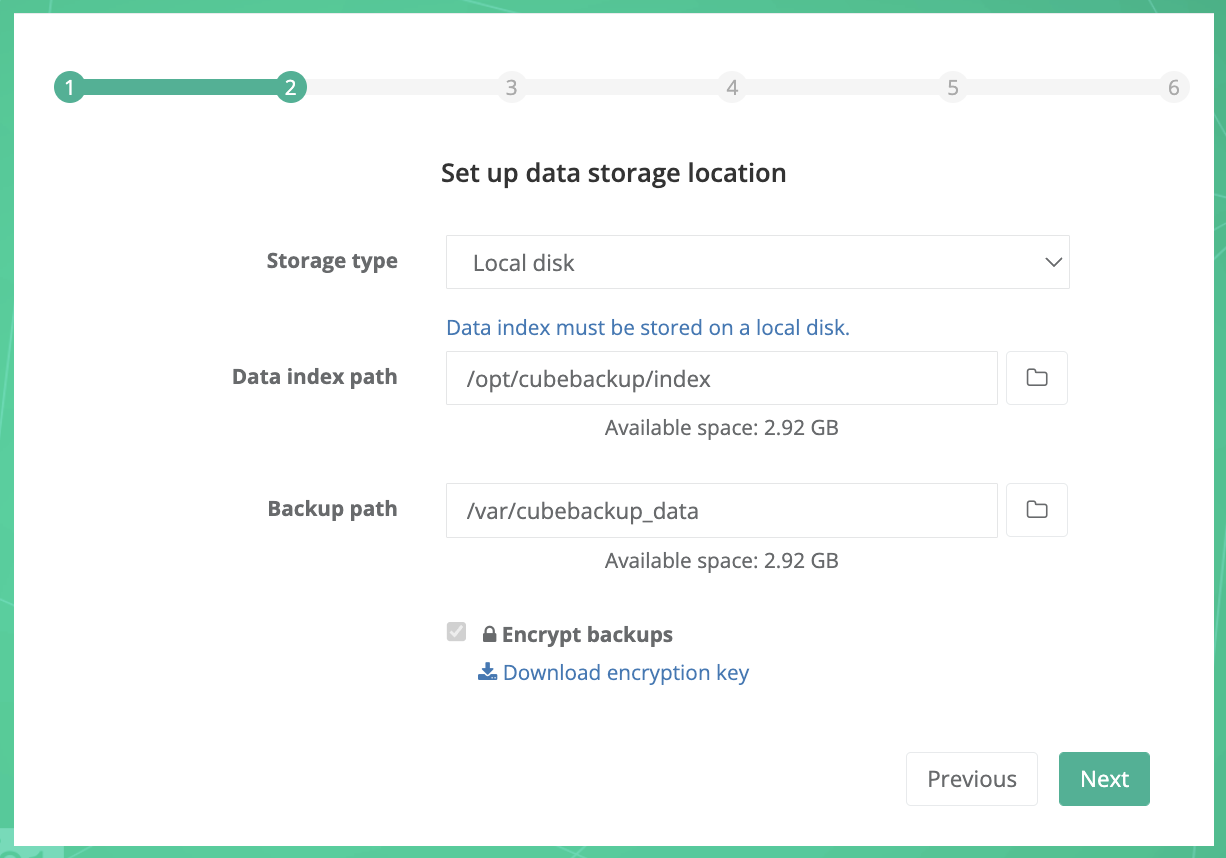

Storage type: Select "Local disk" from the dropdown list.

Data index path: Select the container path for the data index. By default, it is /cubebackup_index.

Note: The data index holds the metadata for the backups, and its access speed is crucial to backup performance. We strongly recommend that you store the data index on a local SSD. See What is the data index for more information.

Backup path: Select the container path for the backup data. By default, it is /cubebackup_data.

Encrypt backups: If you want your backups to be stored encrypted, make sure the "Encrypt backups" option is checked.

Tips:

1. Before clicking Next in the CubeBackup setup wizard, we recommend that you download a copy of the encryption key file using the link provided and store it in a separate, safe location. The key is generated locally and is not stored anywhere except on your servers, which means that we at CubeBackup cannot help you if your key file is lost or damaged through server corruption or natural disaster. For more information about rebuilding your CubeBackup instance in the event of a disaster, see: Disaster recovery of a CubeBackup instance.

2. This option cannot be changed after the initial configuration.

3. Data transfer between Google Cloud and your storage is always HTTPS/SSL encrypted, whether or not this option is selected.

4. Encryption may slow down the backup process by around 10%, and cost more CPU cycles.

When all information has been entered, click the Next button.

Note: If you plan to back up your Google Workspace data to mounted network storage, we strongly recommend storing the data index on an SSD on your local server. Be sure to keep a stable network connection with the NAS, because an interrupted backup process might result in corrupted files in your backup repository. This storage type is not as stable as cloud storage; please use it at your own risk.

Storage type: Select "Mounted network storage" from the dropdown list.

Data index path: Select the container path for the data index. By default, it is /cubebackup_index.

Note: The data index holds the metadata for the backups, and its access speed is crucial to backup performance. We strongly recommend that you store the data index on a local SSD. See What is the data index for more information.

Network storage path: Select the container path for the backup data. By default, it is /cubebackup_data.

Note: Select the path in the docker container here, not the actual network storage path. For example, if the network storage has been mounted to the host machine as /mnt/nas, and you have started the docker using this command:

docker run -d -P -v /mnt/nas/gsuite-backup:/cubebackup_data cubebackup/workspace

then you should select /cubebackup_data here.
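The mapping between host paths and container paths can be sketched in Python as follows. This is only an illustration of how a `-v host:container` volume option translates a host path into the path you select in the wizard; the helper name `container_path_for` is hypothetical, and the sketch assumes Linux-style paths.

```python
from pathlib import PurePosixPath
from typing import Optional, Sequence


def container_path_for(host_path: str, volume_specs: Sequence[str]) -> Optional[str]:
    """Return the container path that a host path maps to, or None if unmapped.

    Each entry in volume_specs has the form used by `docker run -v`,
    i.e. "<host_path>:<container_path>".
    """
    target = PurePosixPath(host_path)
    for spec in volume_specs:
        host, container = spec.split(":")[:2]
        try:
            relative = target.relative_to(host)
        except ValueError:
            continue  # host_path is not inside this volume
        return str(PurePosixPath(container) / relative)
    return None


volumes = ["/mnt/nas/gsuite-backup:/cubebackup_data"]
print(container_path_for("/mnt/nas/gsuite-backup", volumes))
```

A host path that is not covered by any volume mapping returns `None`, which corresponds to a location the container cannot see.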

Encrypt backups: If you want your backups to be stored encrypted, make sure the "Encrypt backups" option is checked.

Tips:

1. Before clicking Next in the CubeBackup setup wizard, we recommend that you download a copy of the encryption key file using the link provided and store it in a separate, safe location. The key is generated locally and is not stored anywhere except on your servers, which means that we at CubeBackup cannot help you if your key file is lost or damaged through server corruption or natural disaster. For more information about rebuilding your CubeBackup instance in the event of a disaster, see: Disaster recovery of a CubeBackup instance.

2. This option cannot be changed after the initial configuration.

3. Data transfer between Google Cloud and your storage is always HTTPS/SSL encrypted, whether or not this option is selected.

4. Encryption may slow down the backup process by around 10%, and cost more CPU cycles.

When all information has been entered, click the Next button.

Note: If you plan to back up your Google Workspace data to Amazon S3 storage, we strongly recommend running CubeBackup on an AWS EC2 instance (e.g. t3.large instance) instead of a local server. Hosting both the backup server and storage on AWS will avoid the bottleneck of all data moving through your local server and greatly improve backup speeds.

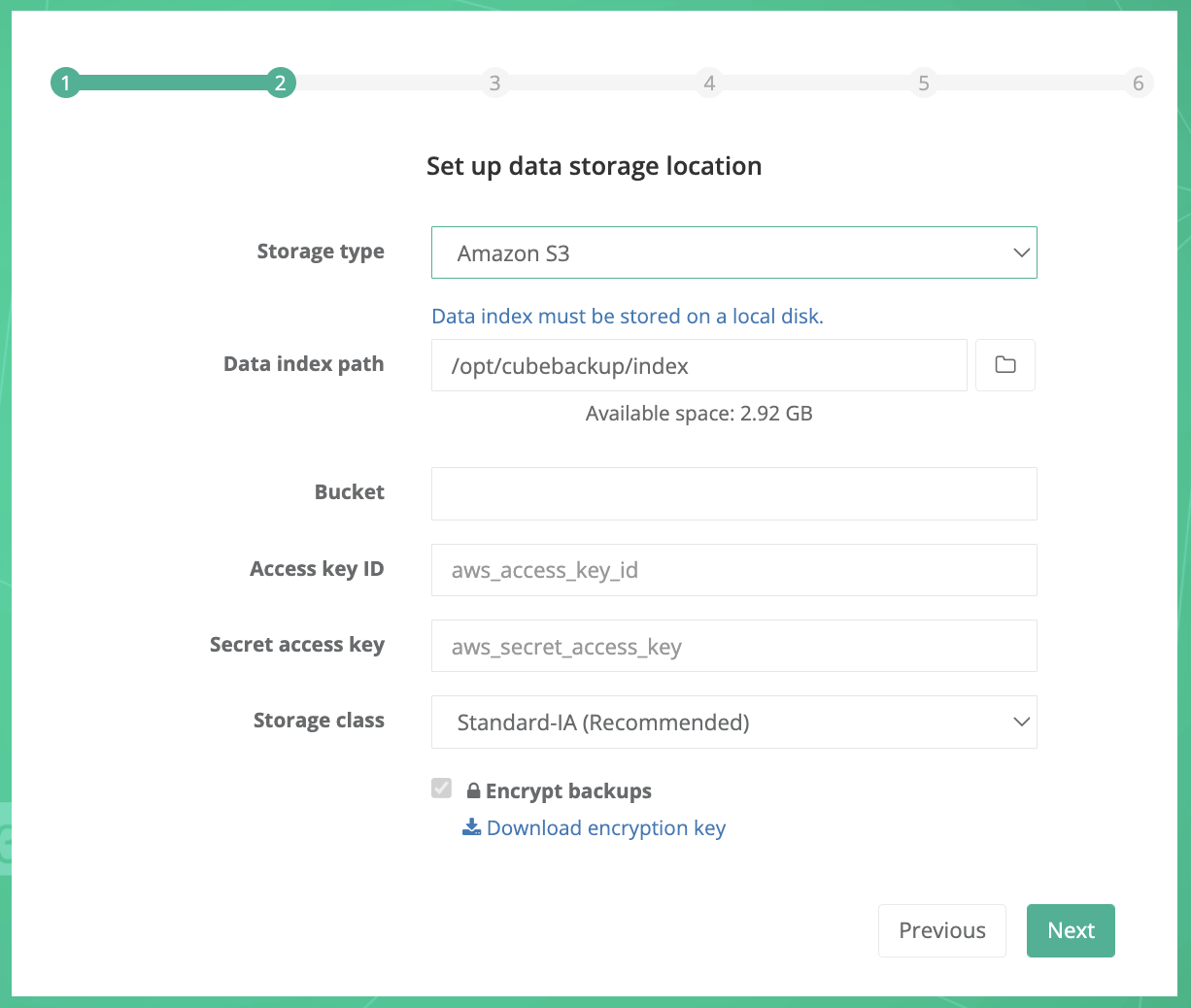

Storage type: Select Amazon S3 from the dropdown list.

Data index path: Select a local directory to store metadata for your backup.

Note: The data index holds the metadata for the backups, and its access speed is crucial to backup performance. We strongly recommend that you store the data index on a local SSD. See What is the data index for more information.

Bucket: The unique name of your S3 storage bucket.

Access key ID: The AWS IAM access key ID to authorize access to data in your S3 bucket.

Secret access key: The secret of your AWS IAM access key.

Please follow the instructions below to create and configure a private Amazon S3 bucket for your backup data.

- Create an AWS account

If your company has never used Amazon Web Services (AWS) before, you will need to create an AWS account. Please visit Amazon Web Services (AWS), click the Create an AWS Account button, and follow the instructions.

If you already have an AWS account, you can sign in directly using your account.

- Create an Amazon S3 bucket

Amazon S3 (Amazon Simple Storage Service) is one of the most widely used cloud storage services in the world. It has been proven to be secure, cost-effective, and reliable.

To create your S3 bucket for Google Workspace backup data, please follow the brief instructions below.

- Open the Amazon S3 console using an AWS account.

- Click Create bucket.

- On the Create bucket page, enter a valid and unique Bucket Name.

- Leave the other available options as they are.

Note:

1. Since CubeBackup already has its own version control and overwrites index files frequently, enabling Bucket Versioning or Advanced settings > Object Lock in the S3 bucket will result in unnecessary file duplication and cost. We recommend leaving these two features disabled.

2. Depending on your company policies, you can change the other default configurations (Block Public Access, Default encryption, etc.). These options will not affect the functioning of CubeBackup.

- Ensure that your S3 settings are correct, then click Create bucket.

- Go back to the CubeBackup setup wizard and enter the name of this newly-created bucket.
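Before entering a bucket name, it can help to check it against the main Amazon S3 naming rules. The sketch below covers only a subset of those rules (length, allowed characters, no adjacent periods, not shaped like an IP address); the function name is illustrative, and the AWS console remains the final authority on whether a name is valid and unique.

```python
import re

# 3-63 characters; lowercase letters, digits, dots and hyphens;
# must begin and end with a letter or digit.
_BUCKET_RE = re.compile(r"^[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]$")
_IP_RE = re.compile(r"^\d{1,3}(\.\d{1,3}){3}$")


def looks_like_valid_bucket_name(name: str) -> bool:
    """Partial check of a candidate S3 bucket name (not exhaustive)."""
    if not _BUCKET_RE.match(name):
        return False
    if ".." in name:
        return False
    if _IP_RE.match(name):
        return False
    return True


# Hypothetical bucket names, for illustration only.
print(looks_like_valid_bucket_name("cubebackup-example-data"))
print(looks_like_valid_bucket_name("CubeBackup_Data"))
```

This check says nothing about uniqueness, which only AWS can confirm when the bucket is created.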


- Create an IAM account

AWS IAM (Identity and Access Management) is a web service that helps you securely control access to AWS resources. Follow the brief instructions below to create an IAM account for CubeBackup and grant access to your S3 bucket.

- Open the AWS IAM console.

- Select Users from the left panel and click Create user.

- Enter a valid User name (e.g. CubeBackup-S3), leave the option "Provide user access to the AWS Management Console" unchecked, and click Next.

- On the Set permissions page, select Attach policies directly under Permissions options.

- Search for the AmazonS3FullAccess policy and check the box in front of it. You can leave the Set permissions boundary option as default.

Tip: Instead of using the "AmazonS3FullAccess" policy, you can also create an IAM account with permissions to the specific S3 bucket for CubeBackup only.

- Click Next, review your IAM user settings, then click Create user.

- Click the name of the newly-created user in the list.

- Choose the Security credentials tab on the user detail page. In the Access keys section, click Create access key.

- On the Access key best practices & alternatives page, choose Application running outside AWS, then click Next.

- Set a description tag value for the access key if you wish. Then click Create access key.

- On the Retrieve access keys page, click Show to reveal the value of your secret access key. Copy the Access key and Secret access key values to the corresponding textboxes in the CubeBackup setup wizard.
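For the bucket-specific alternative mentioned in the tip above, a policy document scoped to a single bucket might look like the one generated below. This is a hedged sketch: the exact list of S3 actions CubeBackup requires is an assumption here, so please check the linked CubeBackup instructions before using it. The function name and bucket name are illustrative.

```python
import json


def bucket_scoped_policy(bucket: str) -> dict:
    """Build an IAM policy document limited to one S3 bucket.

    The action list is an assumption for illustration, not CubeBackup's
    documented requirement.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Listing applies to the bucket itself.
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": f"arn:aws:s3:::{bucket}",
            },
            {
                # Object operations apply to keys inside the bucket.
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject", "s3:DeleteObject"],
                "Resource": f"arn:aws:s3:::{bucket}/*",
            },
        ],
    }


print(json.dumps(bucket_scoped_policy("cubebackup-example-data"), indent=2))
```

The printed JSON can be pasted into the IAM policy editor in place of attaching AmazonS3FullAccess.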

Storage class: Select an Amazon S3 storage class for the backup data. Standard-IA or One Zone-IA is recommended.

For more information about Amazon S3 storage classes, please visit AWS Storage classes. You can find the pricing details for the different S3 storage classes at S3 pricing.

Encrypt backups: If you want your backups to be stored encrypted, make sure the "Encrypt backups" option is checked.

Tips:

1. Before clicking Next in the CubeBackup setup wizard, we recommend that you download a copy of the encryption key file using the link provided and store it in a separate, safe location. The key is generated locally and is not stored anywhere except on your servers, which means that we at CubeBackup cannot help you if your key file is lost or damaged through server corruption or natural disaster. For more information about rebuilding your CubeBackup instance in the event of a disaster, see: Disaster recovery of a CubeBackup instance.

2. This option cannot be changed after the initial configuration.

3. Data transfer between Google Cloud and your storage is always HTTPS/SSL encrypted, whether or not this option is selected.

4. Encryption may slow down the backup process by around 10%, and cost more CPU cycles.

When all information has been entered, click the Next button.

Note: If you plan to back up your Google Workspace data to Google Cloud storage, we strongly recommend running CubeBackup on a Google Compute Engine VM (e.g. e2-standard-2 VM) instead of a local server. Hosting both the backup server and storage on Google Cloud will avoid the bottleneck of all data moving through your local server and greatly improve backup speeds.

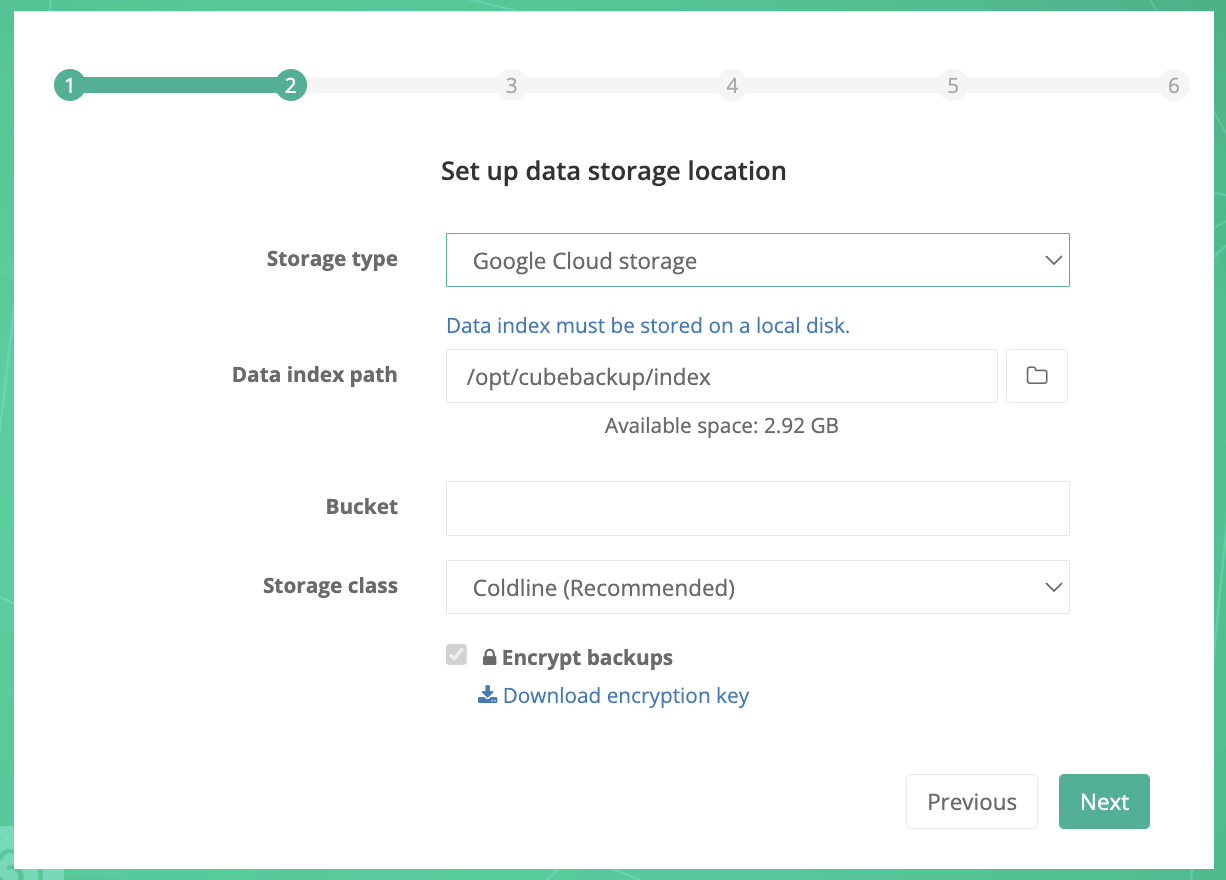

Storage type: Select Google Cloud storage from the dropdown list.

Data index path: Select a local directory to store metadata for your backup.

Note: Since the data index contains the metadata for the backups, access speed is crucially important for the performance of CubeBackup. We strongly recommend that you store the data index on a local SSD. See What is the data index for more information.

Bucket: Before you can back up data to Google Cloud storage, you will first need to create and configure a private Google Cloud Storage bucket.

Storage class: The storage class for the backup data. Coldline is recommended. For more information about Google Cloud storage classes, please visit Storage classes. You can find the pricing details for the different Google Cloud storage classes at Cloud Storage Pricing.

Please follow the detailed instructions below to configure your Google Cloud Storage bucket.

Google Cloud Platform (GCP) uses projects to organize a group of Google Cloud resources. In this new project, you will create a private storage bucket, and manage permissions for it.

Log in to Google Cloud Platform (GCP).

Tip: We recommend using a Google Workspace admin account so that you can take steps to protect this project from accidental changes that could disrupt future backups.

Go to the Projects page in the Google Cloud Console.

Tip: This page can be opened by either clicking the above link or selecting IAM & admin > Manage resources in the navigation menu. The navigation menu slides out from the left of the screen when you click the menu icon in the upper left corner of the page.

Click + Create Project, enter a valid Project name, confirm the Organization and Location, then click Create.

The creation of the project may take one or two minutes. After the project has been created, click the newly created project in the Notifications dialog to make it the active project in your dashboard (you can also select your newly created project in the project drop-down list at the top of the page to make it the active project).

For a newly-created project, you will need to enable billing before using Google Cloud Storage. Select Billing in the navigation menu and follow the prompts to LINK A BILLING ACCOUNT or MANAGE BILLING ACCOUNTS.

- Select Cloud Storage > Buckets from the navigation menu.

- In the Buckets page, click + Create.

- In the Create a bucket page, input a valid name for your bucket, and click Continue.

- Choose where to store your data. Choose a Location type for the bucket, then select a location for the bucket and click Continue.

Tips:

1. CubeBackup is fully compatible with all location types. Depending on the requirements of your organization, you may choose to store backups in single, dual or multi-regions. Details about region plans may be found here.

2. Please select the location based on the security & privacy policy of your organization. For example, EU organizations may need to select regions located in Europe to be in accordance with GDPR.

3. If CubeBackup is running on a Google Compute Engine VM, we recommend selecting a bucket location in the same region as your Google Compute Engine VM.

- Choose a storage class for your data. We recommend selecting Set a default class / Coldline as the default storage class for the backup data, then click Continue.

- Choose how to control access to objects. Leave the Enforce public access prevention on this bucket option checked, choose the default option Uniform as the Access control type, and click Continue.

- Choose how to protect object data. Leave the options as default for this section.

Note: Since CubeBackup constantly overwrites the SQLite files during each backup, enabling the Object versioning or Retention policy would lead to unnecessary file duplication and extra costs.

- Click Create.

- If prompted with the 'Public access will be prevented' notification, please leave the Enforce public access prevention on this bucket option checked as default and click Confirm.

- Go back to the CubeBackup setup wizard and enter the name of this newly created bucket.

Encrypt backups: If you want your backups to be stored encrypted, make sure the "Encrypt backups" option is checked.

Tips:

1. Before clicking Next in the CubeBackup setup wizard, we recommend that you download a copy of the encryption key file using the link provided and store it in a separate, safe location. The key is generated locally and is not stored anywhere except on your servers, which means that we at CubeBackup cannot help you if your key file is lost or damaged through server corruption or natural disaster. For more information about rebuilding your CubeBackup instance in the event of a disaster, see: Disaster recovery of a CubeBackup instance.

2. This option cannot be changed after the initial configuration.

3. Data transfer between Google Cloud and your storage is always HTTPS/SSL encrypted, whether or not this option is selected.

4. Encryption may slow down the backup process by around 10%, and cost more CPU cycles.

When all information has been entered, click the Next button.

Note: If you plan to back up Google Workspace data to Microsoft Azure Blob Storage, we strongly recommend running CubeBackup on a Microsoft Azure Virtual machine (e.g. B2ms instance) to pair with it. Hosting both the backup server and storage on Azure Cloud will avoid the bottleneck of all data moving through a local server and greatly improve backup performance.

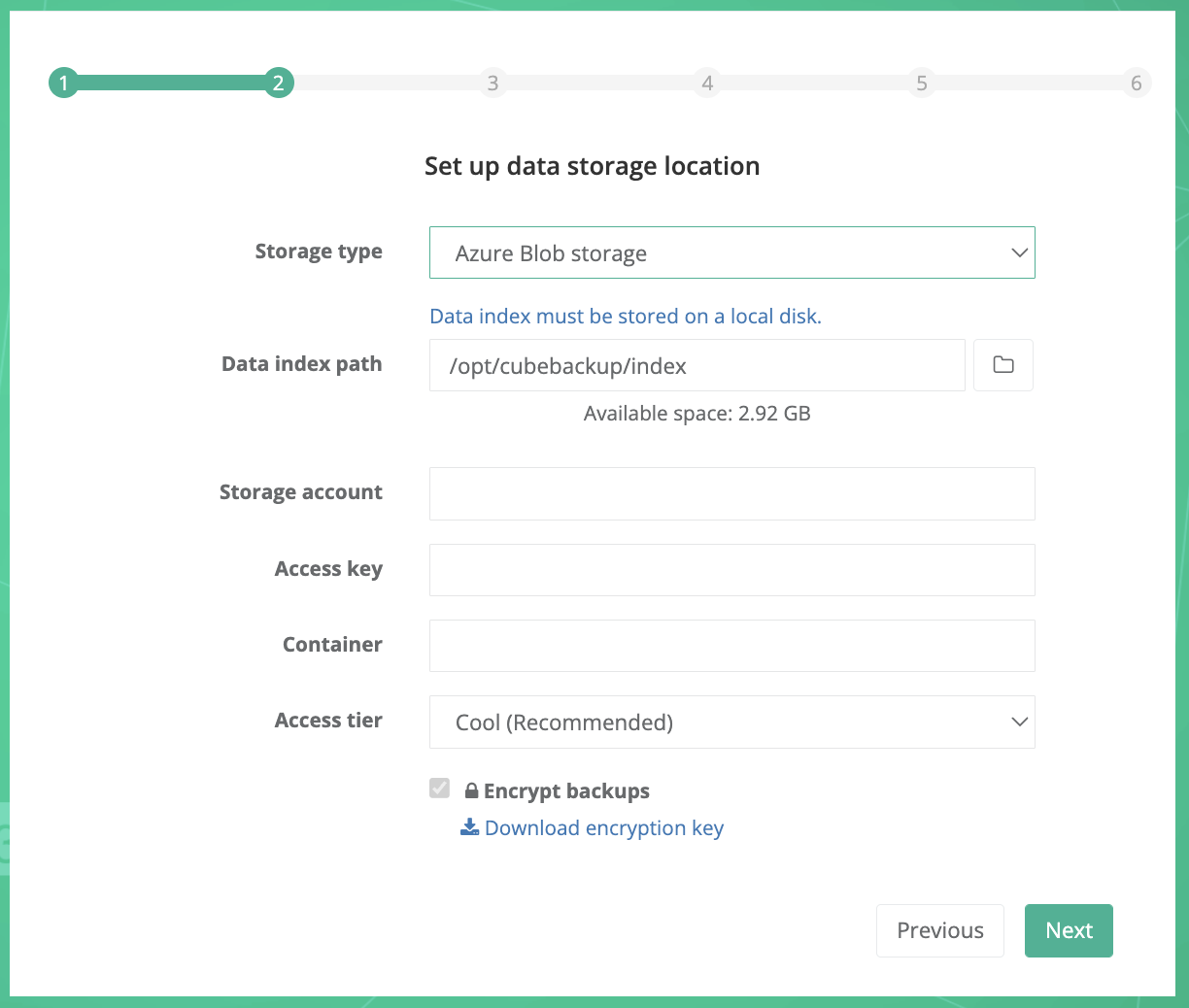

Storage type: Select Azure Blob storage from the dropdown list.

Data index path: Select a local directory to store metadata for your backup.

Note: Since the data index contains the metadata for the backups, access speed is crucially important for the performance of CubeBackup. We strongly recommend that you store the data index on a local SSD. See What is the data index for more information.

Storage account: Your Azure storage account.

Access key: The access key to your storage account.

Container: The container created in your Azure storage account.

For more information about Azure Blob storage, storage accounts, and containers, please visit Introduction to Azure Blob storage.

Access tier: The Access tier for Azure Blob Storage. Cool is recommended.

For more information about Azure Blob Storage access tiers, see this doc. You can find the pricing details for the different Azure Storage access tiers here.

Please follow the instructions below or watch the demo to create a Storage account and a Container for Azure Blob Storage.

Create a storage account

- Log in to the Microsoft Azure Portal using an Azure account with an active subscription. If you do not have an Azure account, sign up to get a new account.

Select Storage Accounts from the left panel and click + Create.

Tip: If you are led back to a welcome page for Azure, it may be because you don't have an active Azure service subscription. In this case, click the Start button below 'Start with an Azure free trial' and follow the instructions to sign up for an Azure subscription.

On the Basics tab, select the Subscription and Resource group in which you'd like to create the storage account.

Next, enter a valid and unique name for your storage account.

Select a Region for your storage account or simply use the default one.

Note: Please select the location based on the security & privacy policy of your organization. For example, EU organizations may need to select Europe to be in accordance with GDPR.

Select the Performance tier. Standard is recommended.

Choose a Redundancy policy to specify how the data in your Azure Storage account is replicated. Zone-redundant storage (ZRS) is recommended. For more information about replication strategies, see Azure Storage redundancy.

On the Data protection tab, uncheck Enable soft delete for blobs. Since CubeBackup constantly overwrites the SQLite files during each backup, enabling this option would lead to unnecessary file duplication and extra costs.

Additional options are available under Advanced, Networking, Data protection and Tags, but these can be left as default.

Select the Review tab, review your storage account settings, and then click Create. The deployment should only take a few moments to complete.

Go back to the CubeBackup setup wizard and enter the name of this newly created storage account.

Get Access key

To authenticate CubeBackup's requests to your storage account, an Access key is required.

- On the Home page, select "Storage Account" > [your newly created storage account]. Then, on the detail page, select Access keys under Security + networking in the left panel.

- On the Access keys page, click Show beside the Key textbox for either key1 or key2.

- Copy the displayed access key and paste it into the Access key textbox in the CubeBackup configuration wizard.

Create a new container

- In the detail page of your newly created storage account, click Containers under Data storage from the left panel.

- On the containers page, click + Add container.

- Enter a valid Name and ensure the Public access level is Private (no anonymous access). You can leave the other Advanced settings as default.

- Click Create.

- Go back to the CubeBackup setup wizard and enter the name of this newly created container.

Encrypt backups: If you want your backups to be stored encrypted, make sure the "Encrypt backups" option is checked.

Tips:

1. Before clicking Next in the CubeBackup setup wizard, we recommend that you download a copy of the encryption key file using the link provided and store it in a separate, safe location. The key is generated locally and is not stored anywhere except on your servers, which means that we at CubeBackup cannot help you if your key file is lost or damaged through server corruption or natural disaster. For more information about rebuilding your CubeBackup instance in the event of a disaster, see: Disaster recovery of a CubeBackup instance.

2. This option cannot be changed after the initial configuration.

3. Data transfer between Google Cloud and your storage is always HTTPS/SSL encrypted, whether or not this option is selected.

4. Encryption may slow down the backup process by around 10%, and cost more CPU cycles.

When all information has been entered, click the Next button.

CubeBackup supports Amazon S3 compatible storage, such as Wasabi and Backblaze B2.

- To create a storage bucket on Wasabi Cloud Storage, please refer to backup Google Workspace data to Wasabi.

- To create a storage bucket on Backblaze B2 storage, please refer to backup Google Workspace data to Backblaze B2.

Note: Usually, S3 compatible cloud storage is not as stable as Amazon S3. Use at your own risk.

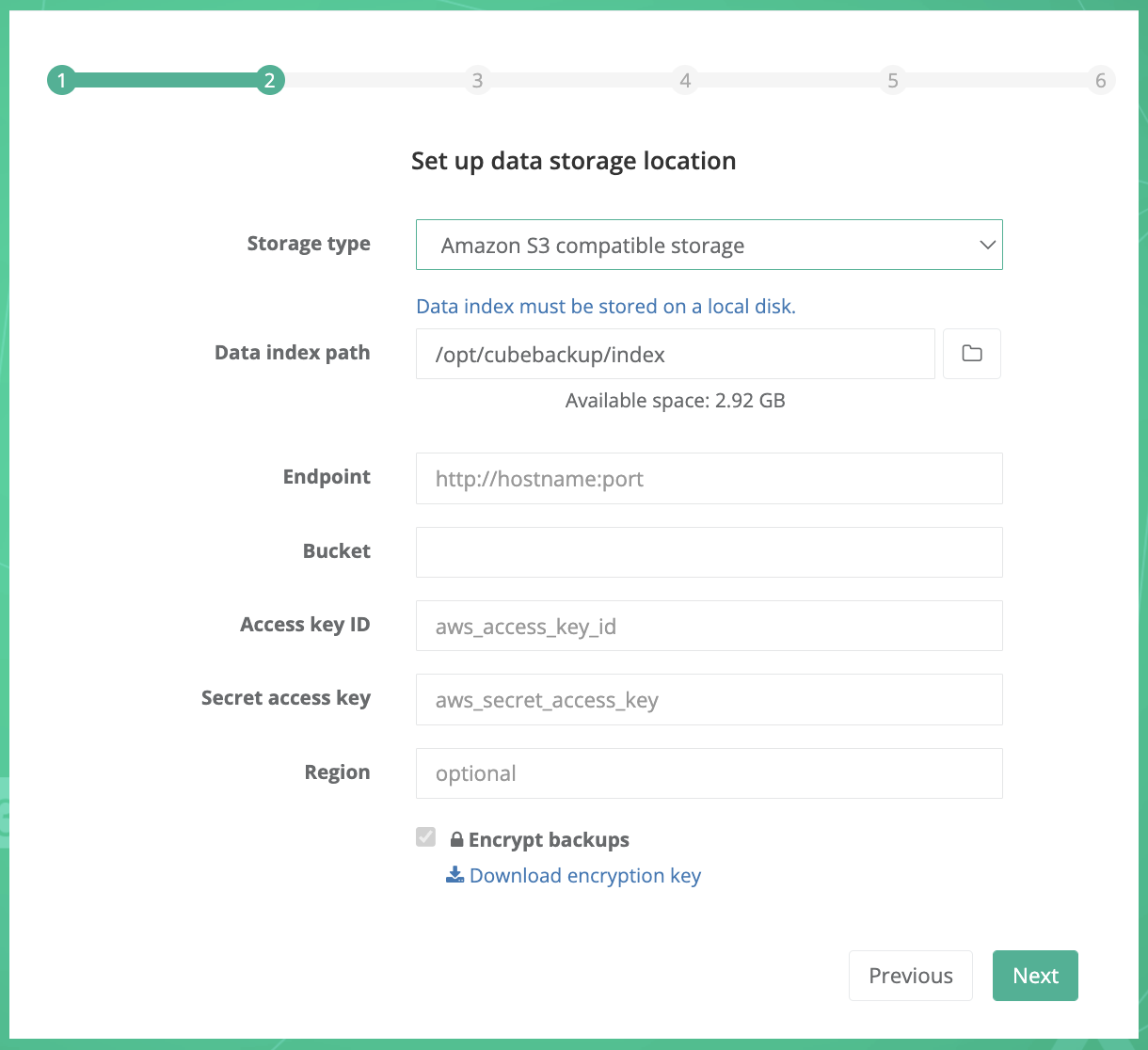

Storage type: Select Amazon S3 compatible storage from the dropdown list.

Data index path: For performance reasons, the data index must be stored on a local disk. In most cases, there is no need to specify a new path for the data index.

Note: The data index holds the metadata for the backups, and its access speed is crucial to backup performance. We strongly recommend that you store the data index on a local SSD. See What is the data index for more information.

Endpoint: The request URL for your storage bucket.

For Backblaze B2 and IDrive e2 users, please ensure that you prepend https:// to the endpoint address.
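The endpoint rule above can be sketched as a small normalization step. The helper name is hypothetical and the sample endpoint is only an example of the general shape; use the endpoint shown in your own storage provider's console.

```python
def normalize_endpoint(endpoint: str) -> str:
    """Prepend https:// to an endpoint address if no scheme is present."""
    endpoint = endpoint.strip()
    if endpoint.startswith(("http://", "https://")):
        return endpoint
    return "https://" + endpoint


# Example endpoint shape, for illustration only.
print(normalize_endpoint("s3.us-west-004.backblazeb2.com"))
```

An endpoint that already carries a scheme is returned unchanged, so applying the helper twice is harmless.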

Bucket: Your S3 compatible storage bucket.

Access key ID: The key ID to access your S3 compatible storage.

Secret access key: The access key value to your S3 compatible storage.

Region: Certain very specific self-hosted S3 compatible storage may require you to manually enter the region. Wasabi and Backblaze users can ignore this section.

Encrypt backups: If you want your backups to be stored encrypted, make sure the "Encrypt backups" option is checked.

Tips:

1. Before clicking Next in the CubeBackup setup wizard, we recommend that you download a copy of the encryption key file using the link provided and store it in a separate, safe location. The key is generated locally and is not stored anywhere except on your servers, which means that we at CubeBackup cannot help you if your key file is lost or damaged through server corruption or natural disaster. For more information about rebuilding your CubeBackup instance in the event of a disaster, see: Disaster recovery of a CubeBackup instance.

2. This option cannot be changed after the initial configuration.

3. Data transfer between Google Cloud and your storage is always HTTPS/SSL encrypted, whether or not this option is selected.

4. Encryption may slow down the backup process by around 10%, and cost more CPU cycles.

When all information has been entered, click the Next button.

Step 3. Create Google Service account

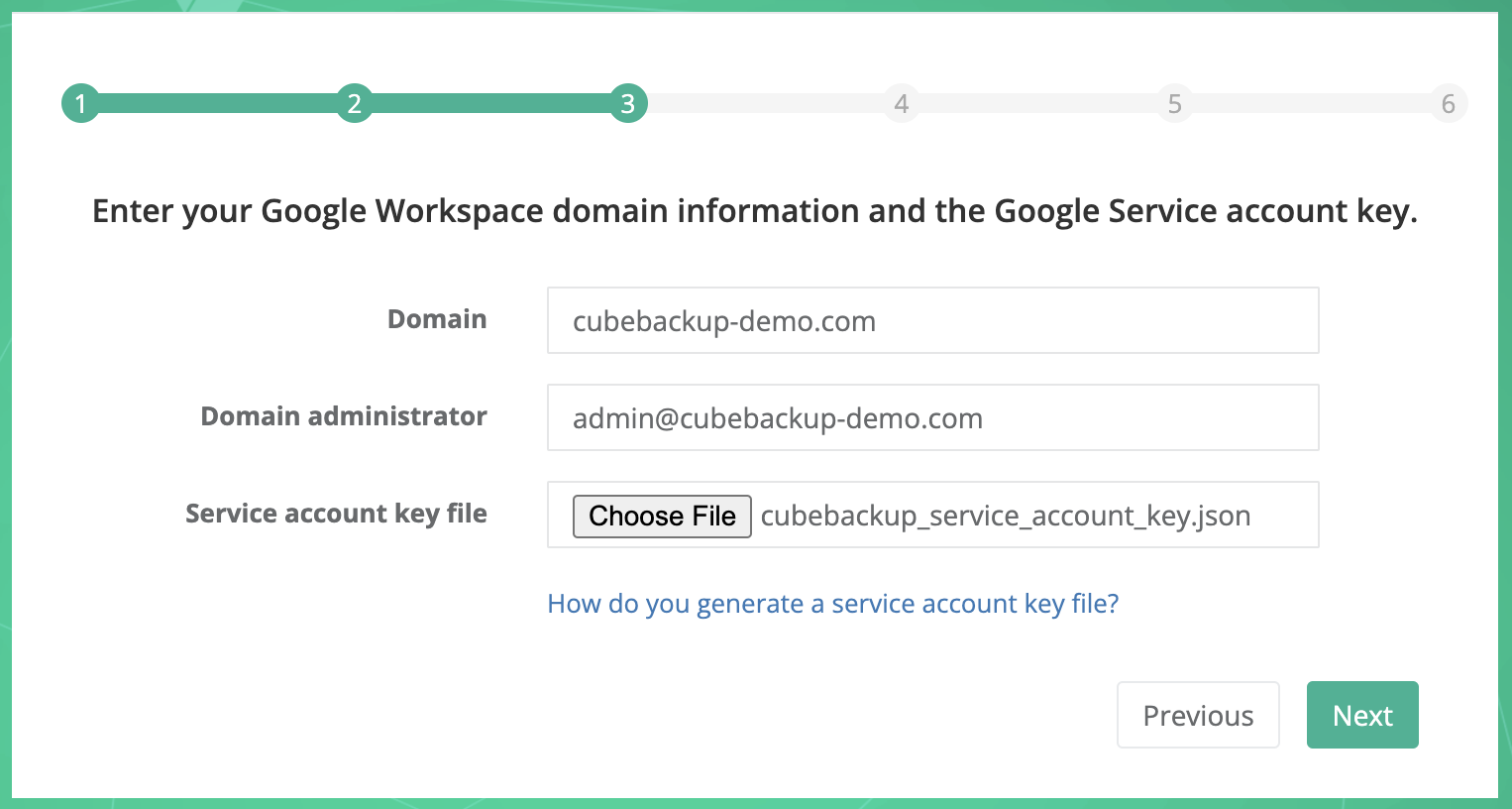

In step 3, you are required to input the Google Workspace domain name, the domain administrator account, and the Service account key file.

What is the Service account key? Why is it needed?

Basically, a service account is a special Google account that is used to call Google APIs, so that users don't need to be directly involved. Refer to this doc for more information.

To generate the service account key file, you can use the automatic CubeBackup Service Account Generator or create one manually in Google Cloud Platform.

The CubeBackup Service Account Generator is a script developed by the CubeBackup team utilizing Google APIs. It can help you create a new project and an associated service account in just one click.

Tips:

The CubeBackup Service Account Generator performs all API requests directly in your browser, and all data transfers are strictly between your browser and Google's servers.

The CubeBackup Service Account Generator is subject to the Privacy policy and Terms of services. If you have any questions, feel free to reach out to us at [email protected].

Please follow the instructions below:

- Click the CubeBackup Service Account Generator button.

In the pop-up dialog, sign in using a Google account.

Tip: We recommend using a Google Workspace admin account so that you can take steps to protect this project from accidental changes that could disrupt future backups.

Check the Select all box to grant all necessary permissions for the CubeBackup Service Account Generator, then click Continue.

After completing all necessary steps, the service account key file will be automatically downloaded to your local storage. If the download does not start properly, please click the link 'cubebackup_service-account-key.json' to manually download it.

After downloading the service account key file, please return to the CubeBackup setup page, and click the Choose File button to select this JSON key file.

Note: If you run into any errors while using this script, please try to "Manually create a service account" or contact us at [email protected].

You can also manually create a service account in Google Cloud Platform and use it in the setup wizard. Please follow the instructions below or watch the demo:

Log in to Google Cloud Platform (GCP).

Tip: We recommend using a Google Workspace admin account so that you can take steps to protect this project from accidental changes that could disrupt future backups.

Create a new project. Google Cloud Console is a place to manage applications/projects based on Google APIs or Google Cloud Services. Begin by creating a new project.

Go to the Projects page in the Google Cloud Console.

Tip: This page can be opened by either clicking the above link or selecting IAM & admin > Manage resources in the navigation menu. The navigation menu slides out from the left of the screen when you click the menu icon in the upper left corner of the page.

Click Create project.

In the New Project page, enter "CubeBackup" as the project name and click Create.

You can leave the Location and Organization fields unchanged. They have no effect on this project.

The creation of the project may take one or two minutes. After the project has been created, click the newly created project in the Notifications dialog to make it the active project in your dashboard (you can also select your newly created project in the project drop-down list at the top of the page to make it the active project).

Note: Please make sure this project is the currently active project in your console before continuing!

Enable Google APIs.

- Now open the API Library page by selecting APIs & services > Library from the navigation menu.

- Search for Google Drive API, then on the Google Drive API page, click Enable (Any "Create Credentials" warning message can be ignored, because service account credentials will be created in the next step).

Next, go back to the API Library page and follow the same steps to enable Google Calendar API, Gmail API, Admin SDK API, and Google People API.

To check whether all necessary APIs have been enabled, please select APIs & Services > Dashboard from the navigation menu, and make sure Admin SDK API, Gmail API, Google Calendar API, Google Drive API and People API are all included in the API list.
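The check described above amounts to a set comparison between the required APIs and the ones listed on the Dashboard. A minimal sketch, assuming you copy the API names from the Dashboard by hand (the function name is illustrative, and no Google API is called here):

```python
from typing import Iterable, List

# The five APIs named in this step.
REQUIRED_APIS = {
    "Admin SDK API",
    "Gmail API",
    "Google Calendar API",
    "Google Drive API",
    "People API",
}


def missing_apis(enabled: Iterable[str]) -> List[str]:
    """Return the required APIs that are not in the enabled list."""
    return sorted(REQUIRED_APIS - set(enabled))


# Hypothetical Dashboard contents, for illustration only.
print(missing_apis(["Gmail API", "Google Drive API"]))
```

An empty list means all five APIs are enabled and you can continue with the next step.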

Create a Service account.

- Select IAM & Admin > Service Accounts in the navigation menu.

- Click + Create service account.

- In the Service account details step, enter a name for the service account (e.g., cubebackup) and click Create and continue.

- In the second step, select "Basic" > "Owner" (or "Project" > "Owner") as the Role, then click Continue.

- Click Done directly in the Principals with access step.

- On the Service accounts page, click the Email link of the service account you just created. This should take you to the Service account details page.

- Select the Keys tab of the service account.

- Click Add key > Create new key.

- Select JSON as the key type, then click Create.

- Close the dialog that pops up and save the generated JSON key file locally (This file will be used as the service account key in CubeBackup's configuration wizard).

Return to the CubeBackup setup page. After the Service account key file has been generated and downloaded to your local computer, click the Choose File button to select the JSON key file generated in the last step.

Before clicking the Next button on the CubeBackup wizard, be sure to follow the steps below to assign the service account permissions to access the newly created bucket.

- Go to the IAM page in the Google Cloud Console.

- In the project dropdown menu at the top of the page, make sure to select the project where your Google Cloud storage bucket is located, and make it the active project.

- Click the + Grant access button. A Grant access to ... dialog will slide out from the right.

- Copy the email address of this newly-created service account. You can find it by opening the service account key file in a text editor and copying the value of the "client_email" field.

- Paste the service account email address into the New principals textbox.

- In the Select a role field, search for Storage Object User and select it as the assigned role.

- Click Save.
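Instead of opening the key file in a text editor, the "client_email" value can also be read programmatically. The following is a minimal sketch; the function name and the sample key content are illustrative, and the sample contains placeholder values only.

```python
import json


def client_email_from_key(key_json: str) -> str:
    """Return the "client_email" value from service account key JSON text."""
    key = json.loads(key_json)
    if key.get("type") != "service_account":
        raise ValueError("this does not look like a service account key file")
    return key["client_email"]


# Placeholder key content, for illustration only (not a real key).
sample = json.dumps({
    "type": "service_account",
    "project_id": "example-project",
    "client_email": "cubebackup@example-project.iam.gserviceaccount.com",
})
print(client_email_from_key(sample))
```

To use it with the downloaded file, read the file's text first, for example with `open(path, encoding="utf-8").read()`, and pass the result to the function.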

Go back to the CubeBackup setup wizard and check if the Domain, Domain administrator, and Service account key file are all set, and then click Next.

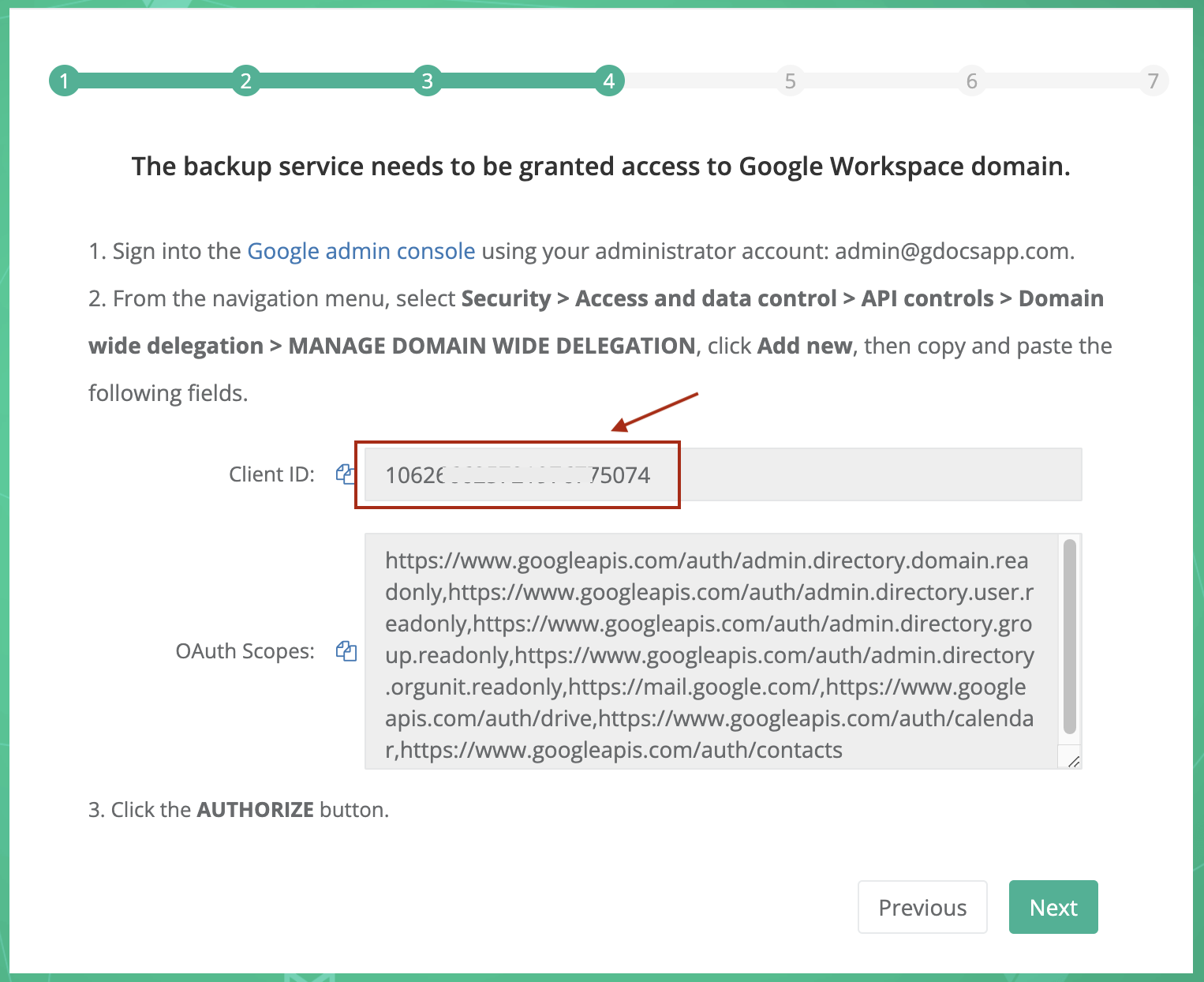

Step 4. Authorize domain-wide access

After the Google service account has been created, it needs to be authorized to access your Google Workspace data through Google APIs. Please follow the instructions below or watch the demo.

All operations in this step must be performed by an administrator of your Google Workspace domain.

- Sign in to the Google Admin console using an administrator account in your domain.

- From the main menu in the top-left corner, select Security > Access and data control > API controls.

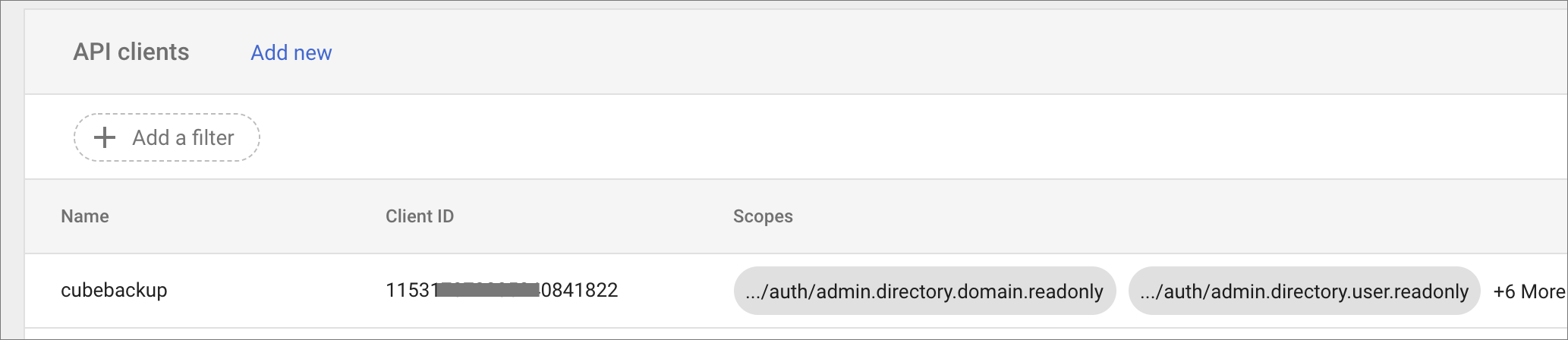

- Click MANAGE DOMAIN WIDE DELEGATION in the "Domain wide delegation" section.

- In the Domain-wide Delegation page, click Add new.

- In the Client ID field, enter the service account's Client ID shown in step 4 of the setup wizard.

In the OAuth Scopes field, copy and paste this list of scopes:

https://www.googleapis.com/auth/admin.directory.domain.readonly, https://www.googleapis.com/auth/admin.directory.user.readonly, https://www.googleapis.com/auth/admin.directory.orgunit.readonly, https://www.googleapis.com/auth/admin.directory.group.readonly, https://mail.google.com/, https://www.googleapis.com/auth/drive, https://www.googleapis.com/auth/calendar, https://www.googleapis.com/auth/contacts

Click AUTHORIZE.

CubeBackup now has the authority to make API calls in your domain. Return to the CubeBackup setup page, and click the Next button to check if these configuration changes have been successful.

Note: If any error messages pop up, please wait a few minutes and try again. In some cases, Google Workspace domain-wide authorization needs some time to propagate. If it continues to fail, please recheck all your inputs and refer to How do you solve the authorization failed error.
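If an authorization error persists, a copy-and-paste slip in the scope list from this step is one thing worth ruling out. As a sketch, the scopes can be kept as a Python list and joined into the comma-separated form used in the OAuth Scopes field; the variable and function names are illustrative.

```python
# The eight scopes listed in this step.
SCOPES = [
    "https://www.googleapis.com/auth/admin.directory.domain.readonly",
    "https://www.googleapis.com/auth/admin.directory.user.readonly",
    "https://www.googleapis.com/auth/admin.directory.orgunit.readonly",
    "https://www.googleapis.com/auth/admin.directory.group.readonly",
    "https://mail.google.com/",
    "https://www.googleapis.com/auth/drive",
    "https://www.googleapis.com/auth/calendar",
    "https://www.googleapis.com/auth/contacts",
]


def scopes_field(scopes=SCOPES) -> str:
    """Join the scopes into one comma-separated string, rejecting duplicates."""
    if len(set(scopes)) != len(scopes):
        raise ValueError("duplicate scope in list")
    return ", ".join(scopes)


print(scopes_field())
```

Comparing the printed string with what was entered in the Admin console makes a missing or truncated scope easy to spot.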

Step 5. Sync with Google Workspace

Wait for CubeBackup to sync the user and shared drive list in your Google Workspace.

Step 6. Select users

Now you can select which Google Workspace users you would like to back up.

- By default, all valid users are selected.

- You can expand an Organization Unit by clicking the OU to see users in that OU.

You can even disable the backup for all users in an OU by deselecting the checkbox beside that OU.

For example, if a school wanted to back up only the data for teachers and not students, they could select the OU for teachers and leave the OU for students unchecked.

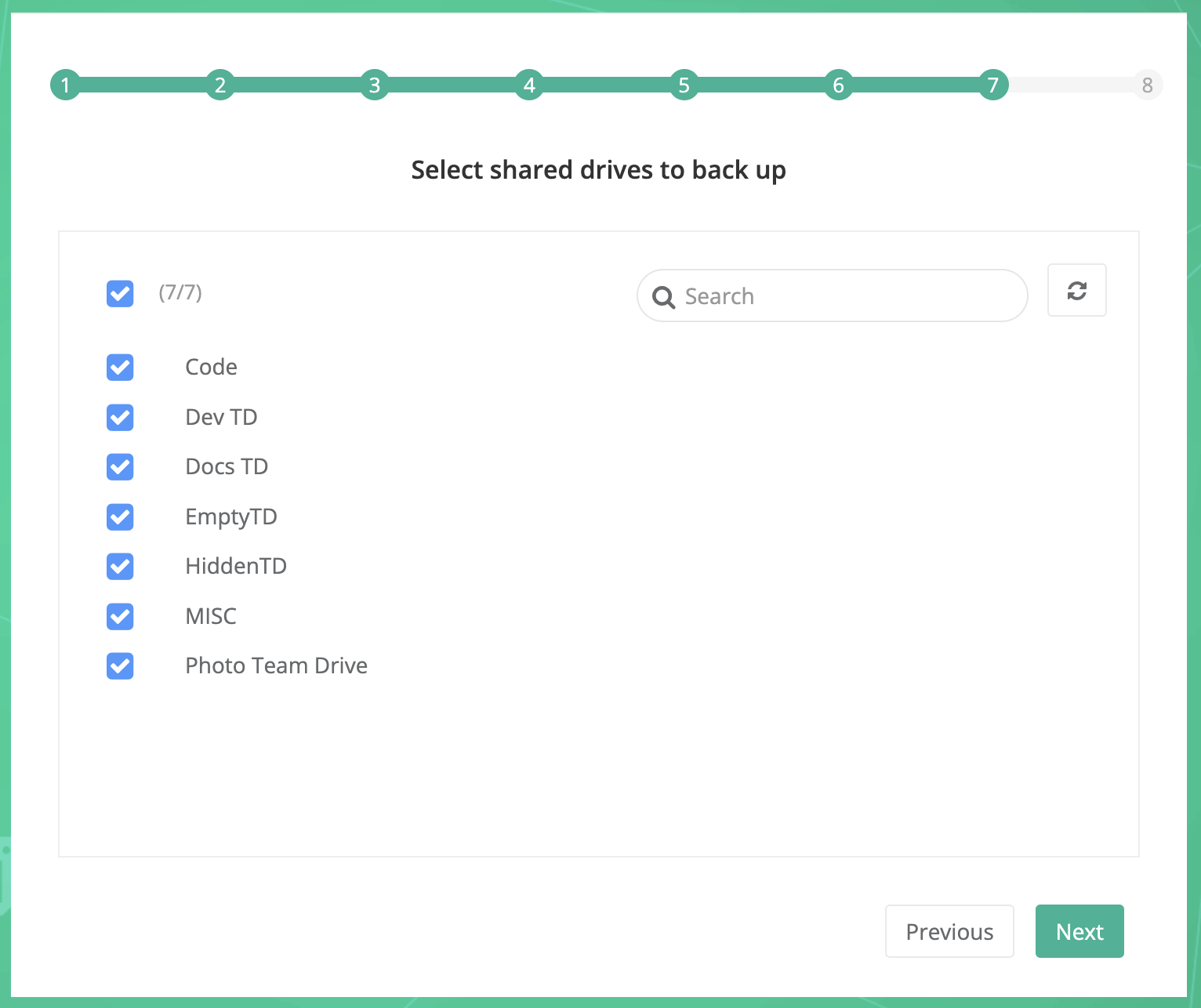

Step 7. Select Shared drives

This step only applies to Google Workspace Business/Enterprise/Education/Nonprofit organizations that have the Shared drives feature enabled. For Google Workspace Legacy or Google Workspace Basic organizations, this step will be skipped.

You can select which Shared drives you would like to back up.

Step 8. Set administrator password

In this step, you can set up the CubeBackup web console administrator account and password.

This account and password are only for the CubeBackup console; they have no relationship with any Google Workspace services.

The administrator account does not need to be the Google Workspace administrator of your organization. You can make anyone the CubeBackup administrator.

After the initial configuration of CubeBackup, you can log into the web console to start the backup or configure CubeBackup with more options.